After Italy became the first Western country to block advanced chatbot ChatGPT on Friday over lack of transparency in its use of data, the question around Europe is: Who will follow?

The decision has already inspired various neighboring countries.

“In the space of a few days, specialists from all over the world and a country, Italy, are trying to slow down the meteoric progression of this technology, which is as prodigious as it is worrying,” writes the French daily Le Parisien.

Several cities in France have already started their own research “to assess the changes brought about by ChatGPT and the consequences of its use in the context of local action,” reports Ouest-France.

“The city of Montpellier wants to ban ChatGPT for municipal staff, as a precaution,” the paper reports. “The ChatGPT software should be banned within municipal teams considering that its use could be detrimental.”

The Irish data protection commission, according to the BBC, is following up with the Italian regulator to understand the basis for its action and “will coordinate with all E.U. (European Union) data protection authorities” in connection with the ban.

The Information Commissioner’s Office, the U.K.’s independent data regulator, also told the BBC that it would “support” developments in AI but that it was ready to “challenge non-compliance” with data protection laws.

ChatGPT is already blocked in various countries like China, Iran, North Korea and Russia.

The E.U. is in the process of preparing the Artificial Intelligence Act, legislation “to define which AIs are likely to have societal consequences,” explains Le Parisien. “This future law should in particular make it possible to fight against the racist or misogynistic biases of generative artificial intelligence algorithms and software (such as ChatGPT).”

The Artificial Intelligence Act also contemplates appointing one regulator per country in charge of artificial intelligence.

The Italian case

The Italian data-protection authority explained that it was banning and investigating ChatGPT due to privacy concerns relating to the model, which was created by U.S. start-up OpenAI, a company backed by billions of dollars in investment from Microsoft.

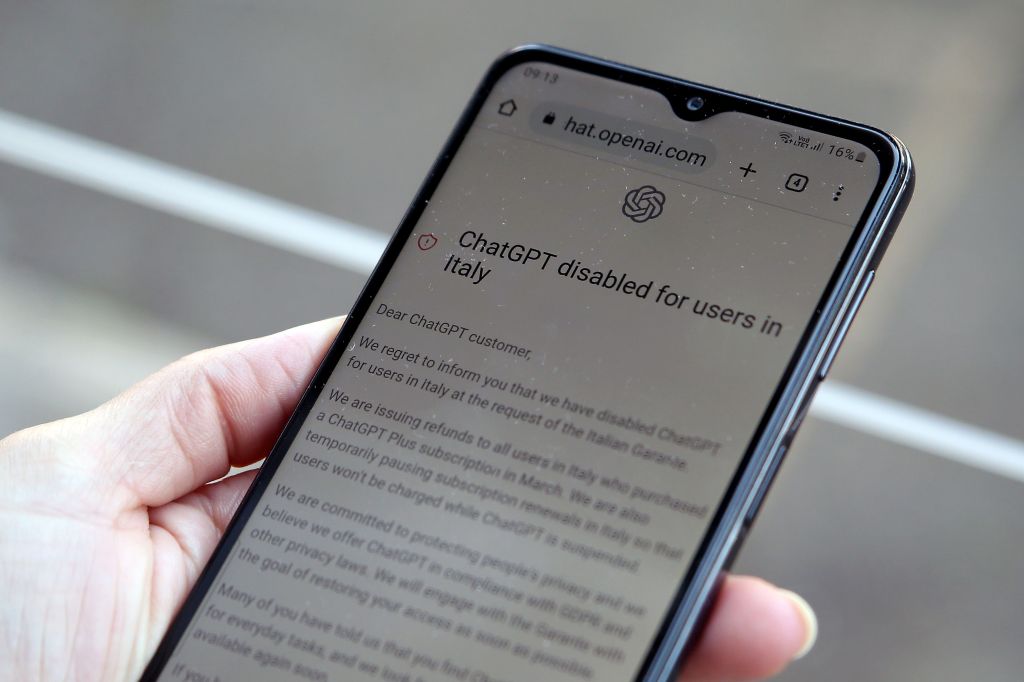

The decision, announced “with immediate effect” by the Italian National Authority for the Protection of Personal Data, was taken because “the ChatGPT robot is not respecting the legislation on personal data and does not have a system to verify the age of minor users,” Le Point reported.

“The move by the agency, which is independent from the government, made Italy the first Western country to take action against a chatbot powered by artificial intelligence,” wrote Reuters.

The Italian watchdog said that not only would it block OpenAI’s chatbot but it would also investigate whether it complied with the E.U.’s General Data Protection Regulation.

Protecting minors

The authority adds that the new technology “exposes minors to absolutely unsuitable answers compared to their degree of development and awareness.”

The Italian Authority’s press release further explains that on March 20, ChatGPT “suffered a loss of data (‘data breach’) concerning the conversations of users and information relating to the payment of subscribers to the paid service.”

It also points to “the absence of a legal basis justifying the mass collection and storage of personal data for the purpose of ‘training’ the algorithms underlying the operation of the platform.”

ChatGPT was unveiled to the public in November and was quickly taken up by millions of users impressed with its ability to clearly answer difficult questions, mimic writing styles, write sonnets and papers and even pass exams. ChatGPT can also be used to write computer code by people who lack the technical knowledge to do so themselves.

The craze around ChatGPT

“Since its release last year, ChatGPT has set off a tech craze, prompting rivals to launch similar products and companies to integrate it or similar technologies into their apps and products,” writes Reuters.

“OpenAI, which disabled ChatGPT for users in Italy on the back of the agency’s request, said on Friday it actively works to reduce the use of personal data in training its AI systems like ChatGPT.”

The Italian watchdog is now asking OpenAI to “communicate within 20 days the measures undertaken” to remedy this situation, or risk a fine of up to €20 million ($21.7 million) or 4% of annual worldwide turnover, according to Euronews.

The announcement comes as the European police agency Europol warned on Monday that criminals were ready to take advantage of AI chatbots such as ChatGPT to commit fraud and other cybercrimes.

From phishing to misinformation and malware, the rapidly evolving capabilities of chatbots are likely to be quickly exploited by those with malicious intent, Europol warned in a report.

This article was first published on forbes.com