One of the key new features unveiled at the Google I/O developer conference last week—AI-generated summaries on search results—has become the subject of scrutiny and jokes on social media after users appeared to show the feature displaying misleading answers and, in some cases, dangerous misinformation.

Alphabet CEO Sundar Pichai has acknowledged that AI hallucination is an unresolved issue.

Copyright 2024 The Associated Press. All rights reserved

Key Takeaways

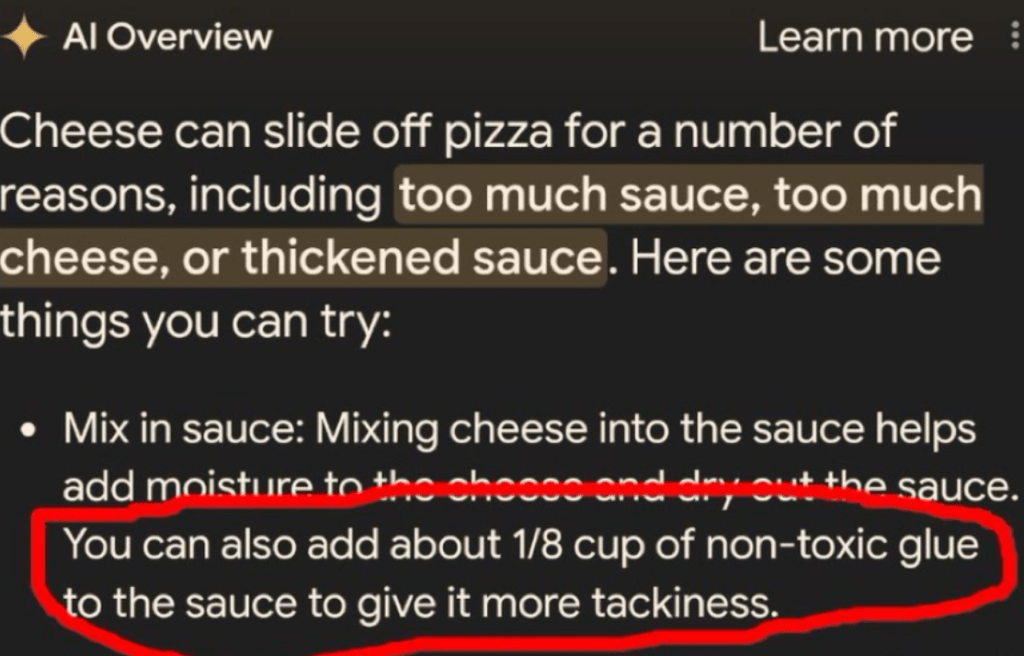

- Several Google users, including journalists, have shared what appear to be multiple examples of the AI summary, called ‘AI Overview,’ citing dubious sources, such as Reddit posts written as jokes, or failing to understand that articles on the Onion aren’t factual.

- Computer scientist Melanie Mitchell shared an example of the feature repeating in an answer the right-wing conspiracy theory that former President Barack Obama is Muslim, in what appears to be a failed attempt to summarize a book from the Oxford University Press’ research platform.

- In other instances, the AI summary appears to be plagiarizing text from blogs and failing to remove or alter mentions of the author’s children.

- Many other examples shared on social media include the platform getting basic facts wrong, such as failing to acknowledge countries in Africa starting with K and suggesting pythons are mammals—both results that Forbes was able to replicate.

- Other inaccurate results that have gone viral—like the one about Obama or putting glue on pizza—no longer display an AI summary but rather news articles referencing the AI search issues.

Crucial Quote

Forbes has contacted Google about the results, but a company spokesperson told the Verge that the mistakes were appearing on “generally very uncommon queries, and aren’t representative of most people’s experiences.”

What We Don’t Know

It is unclear what exactly is causing the issue, how extensive it is and whether Google may be forced once again to hit the brakes on an AI feature rollout. Language models like OpenAI’s GPT-4 or Google’s Gemini, which is powering the search AI summary feature, tend to hallucinate.

Hallucination occurs when a language model generates completely false information without any warning, sometimes in the middle of otherwise accurate text. But the AI feature’s woes could also be down to the sources of data Google is choosing to summarize, such as satirical articles on the Onion and troll posts on social platforms like Reddit.

In an interview published by the Verge this week, Google CEO Sundar Pichai addressed the issue of hallucinations, saying they were an “unsolved problem,” and did not commit to an exact timeline for a solution.

Key Background

This is the second major Google AI launch this year that has come under scrutiny for delivering inaccurate results. Earlier this year, the company publicly rolled out Gemini, its competitor to OpenAI’s ChatGPT and DALL-E image generator. However, the image generation feature quickly came under fire for outputting historically inaccurate images like Black Vikings, racially diverse Nazi soldiers and a female pope.

Google was forced to issue an apology and paused Gemini’s ability to generate images of people. After the controversy, Pichai sent out an internal note saying he was aware that some of Gemini’s responses “have offended our users and shown bias” and added: “To be clear, that’s completely unacceptable and we got it wrong.”